Experience Lab

The Experience Lab is a research and experimentation environment focused on understanding how people experience products, environments, and services. It is a place where human perception, emotion, and behavior are studied in a controlled and measurable way.

At its core, the lab is built around the idea that experiences are shaped by emotions. These emotions influence how people remember interactions, how they make decisions, and ultimately how they value products and services.

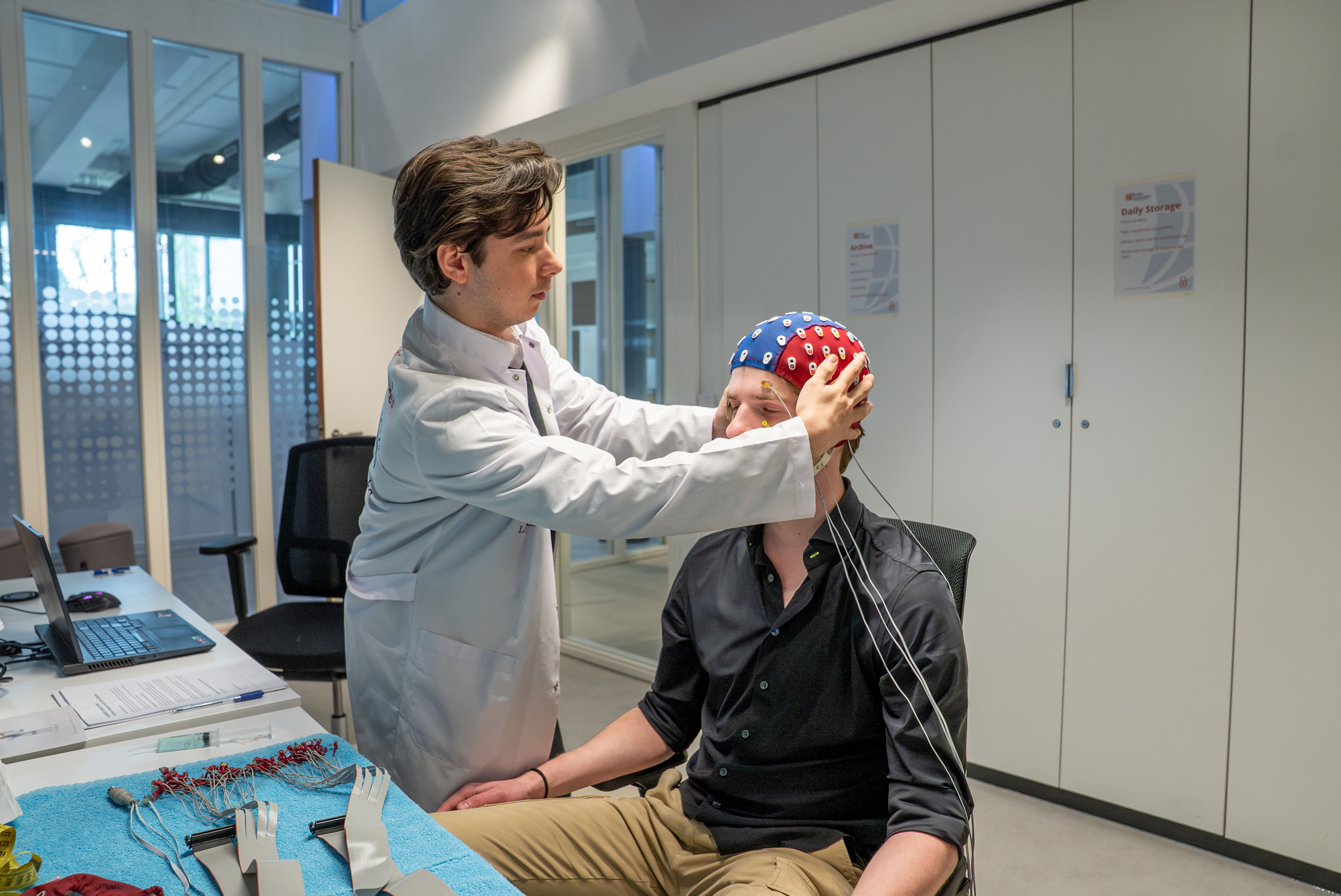

The lab provides access to advanced measurement technologies that capture physiological and cognitive responses. These include electroencephalography (EEG) to measure brain activity and wearable sensors that track signals such as heart rate, skin conductance, and movement.

Experiments can be conducted both in controlled lab settings and in real-world environments. For example, experiences can be simulated using virtual reality, or measured in the field using wearable devices during live events, museum visits, or transportation scenarios.

This combination of controlled experimentation and real-world measurement allows researchers and students to study experiences as they unfold over time. By linking physiological signals to behavior and perception, the lab enables a deeper understanding of how people interact with systems, environments, and technologies.

What Our Students Are Building

Our students use the Experience Lab for a wide range of research-driven projects. Some carry out their graduation projects in the lab, while others work there on part-time research or applied assignments.

Ionut Botoroga, a fourth-year ADS&AI student, is currently working on a graduation project in collaboration with the Experience Lab, focusing on brain–computer interfaces (BCIs) for controlling robotic systems.

His project addresses a key limitation in current BCI systems: the difficulty of reliably translating brain signals into complex actions. Traditional approaches rely heavily on motor imagery, in which the user imagines a movement without performing it, and this limits the number of distinct commands that can be detected.

To overcome this, he is developing a hybrid approach that combines different types of mental activity. For example, specific commands are linked to distinct cognitive tasks such as mental arithmetic or spatial reasoning, allowing the system to better distinguish between user intentions.
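As a rough illustration of this idea, the sketch below maps decoded mental-task labels to robot commands. The task labels, command names, and confidence threshold are hypothetical and not taken from the project; the decoder that produces the per-task probabilities is assumed to exist elsewhere.

```python
from typing import Dict, Optional

# Hypothetical mapping from a decoded mental task to a robot command.
# Both the task labels and the command names are illustrative assumptions.
TASK_TO_COMMAND: Dict[str, str] = {
    "motor_imagery_left": "turn_left",
    "motor_imagery_right": "turn_right",
    "mental_arithmetic": "move_forward",
    "spatial_reasoning": "grip",
}


def select_command(probabilities: Dict[str, float],
                   threshold: float = 0.6) -> Optional[str]:
    """Return the command for the most likely task, or None if the decoder is unsure."""
    if not probabilities:
        return None
    # Pick the task with the highest decoded probability.
    task, prob = max(probabilities.items(), key=lambda item: item[1])
    # Issue no command when confidence is low or the task is unknown.
    if prob < threshold or task not in TASK_TO_COMMAND:
        return None
    return TASK_TO_COMMAND[task]
```

Returning no command when confidence is low is one possible design choice: for a robot, doing nothing is usually safer than acting on an uncertain guess.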

The system is built on a full AI pipeline, including signal preprocessing, feature extraction, and deep learning models that analyze temporal patterns in brain activity. Data is collected using a 64-channel EEG setup in the Experience Lab, providing high-resolution measurements of neural signals.
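To make the first two pipeline stages more concrete, here is a minimal sketch of preprocessing and feature extraction in plain Python. It is not the project's implementation: the mean removal, the band-power features, the band limits, and all function names are assumptions chosen for illustration, and the deep learning stage is left out entirely. A real pipeline would more likely rely on dedicated signal-processing libraries.

```python
import cmath
import math
from typing import Dict, List, Tuple

# Conventional EEG frequency bands in Hz (an assumption; limits vary by source).
BANDS: Dict[str, Tuple[float, float]] = {
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
}


def remove_mean(signal: List[float]) -> List[float]:
    """Preprocessing step: subtract the mean to remove the constant offset."""
    mean = sum(signal) / len(signal)
    return [x - mean for x in signal]


def band_power(signal: List[float], fs: float, low: float, high: float) -> float:
    """Mean power of the DFT bins with low <= frequency < high (naive DFT)."""
    n = len(signal)
    total, count = 0.0, 0
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if low <= freq < high:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(signal))
            total += abs(coeff) ** 2 / n ** 2
            count += 1
    return total / count if count else 0.0


def extract_features(channels: List[List[float]], fs: float) -> List[float]:
    """Return one band-power value per band for each channel, in channel order."""
    features: List[float] = []
    for channel in channels:
        clean = remove_mean(channel)
        features.extend(band_power(clean, fs, low, high)
                        for low, high in BANDS.values())
    return features
```

For a 64-channel recording this would produce 64 × 3 values per time window, which a downstream model could take as input.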

The goal is to enable more expressive and precise control of robotic systems, moving from simple commands toward multi-dimensional interaction. As a proof of concept, the system will be evaluated in a controlled environment, with potential applications in assistive robotics.

Through this project, Ionut is combining machine learning, signal processing, and experimental design in a real research setting. He is not only developing models, but also designing experiments, collecting human data, and evaluating system performance under realistic conditions.

Projects like this reflect the role of the Experience Lab in our program: enabling students to work on complex, interdisciplinary problems where AI interacts directly with human behavior.